The Decision Architecture Manifesto

You've optimized how you meet. You haven't designed how you decide.

Your organization makes hundreds of decisions a week.

Most of them happen in meetings. And most of those meetings weren’t designed to decide anything.

The machinery that runs those meetings was built for a different problem.

It was built for a time when “more output” meant hiring. And hiring isn’t a knob you turn.

You run interviews for weeks. You finally get someone to sign. Then you wait. Best case, you get real yield six weeks in.

More often it’s three to four months before the person is doing work that changes results. And that’s if the first 90 days go well.

So we built guardrails around execution. Pre-meetings, approvals, roadmaps, committees — anything to keep a bad call from turning into a costly quarter.

Now AI makes execution cheap.

But the old machinery is still running, protecting a cost that’s mostly gone. So teams use AI to move faster inside the same loop, and the loop keeps aiming at the same problems.

Cheap execution changes what you can try. Being wrong costs days, not months.

The question isn’t how to protect execution anymore.

It’s which problems you couldn’t afford to chase—until now.

Most companies are treating this shift like a tool rollout. That’s why they plateau.

The Productivity Ceiling

Earlier this month, the Wall Street Journal ran a piece that landed in boardrooms across the country and got filed under the wrong diagnosis.

Companies were hiring consulting firms, with real budgets, to teach employees how to use AI. Leadership teams read it as a familiar story: adoption lag, change-management drag, normal deployment pain. Close the gap, usage rises, returns show up.

That reading nailed the symptom. It missed the cause.

The adoption problem wasn’t that employees couldn’t use the tools. It was that no one had changed what the tools were being used toward. The meetings stayed the same. The decisions stayed the same.

The execution got faster. Direction didn’t.

Every org chart, every meeting cadence, every weekly sync and quarterly offsite was designed to do one thing.

Coordinate human execution.

Status checks. Handoffs. Follow-through. The messy middle between “we decided to do this” and “this is done.”

Work about work.

That was the correct design for a long time. Humans executing in parallel are inconsistent, territorial, and slow.

Every process, every governance structure, every standing meeting answers a version of the same question: how do we get humans to do this thing reliably, at scale, across time?

AI just made the design spec obsolete.

The Messy Middle Just Became AI’s Job

An agentic system handles execution coordination at a different order of magnitude.

What needed a weekly sync, agents handle continuously. What needed a daily standup, agents track and surface as it happens. What needed a monthly review, agents aggregate and flag in real time.

Status chasing, handoffs, follow-through, anomaly detection, progress tracking, document synthesis, action item routing: these are now machine jobs. AI does them in the minutes between your conversations instead of consuming your hours.

Teams that use AI to do work about work faster get a bump. Teams that remove work about work from the human calendar get something else: time back for work no agent can do.

If AI can coordinate what your team currently meets to coordinate, every hour spent in those meetings is an hour not spent on the part that still needs judgment. You’re spending attention on work that’s already cheap.

Forget “How do we run better meetings?”

If AI handles coordination, what are meetings for?

Your New Job: Decide What’s True

When you pull execution coordination out of the agenda, one category of work remains: judgment.

Judgment #1: Staying in Sync with Your Customer

Your business moves as your customer moves. AI doesn’t change that reality, but AI executes against a snapshot. The meeting is where you verify the snapshot is still accurate.

Is what we know about the customer still true?

Is the problem we’re solving still the problem they have?

Are the agents executing toward the right destination, or executing precisely toward the wrong one?

This is not strategic planning. It’s calibration. The weather service doesn’t redesign its forecasting architecture every week. It updates the inputs.

Judgment #2: Writing Specs AI Can Run

Agents execute against the context they carry. The quality of their execution is bounded by the quality of that context.

The meeting is where you ask:

Do the agents have what they need to keep firing?

What changed this week that they don’t know?

What decision got made in one conversation that hasn’t been encoded into the execution cycle?

Judgment #3: Finding the Next Problem

This is the one that determines whether an organization compounds or flatlines. The execution engine is running against today’s problem set.

New problems, emerging customer signals, opportunities that don’t fit the current model — none of this gets surfaced by agents. It gets surfaced in human conversation.

The meeting is where you ask: what are we not going after yet that we should be?

Three jobs. One governing logic.

The meeting keeps the execution engine calibrated to reality.

Agents handle the rest: status updates, progress reviews, escalation chains, resource debates. The meeting is for what agents cannot do: maintain the sync between what’s happening in the world and what the execution layer is running toward.

The Context Engine

Same company. Same leadership team. Same agenda item: a market expansion decision. Sales has surfaced a new segment. The question: pursue now or wait.

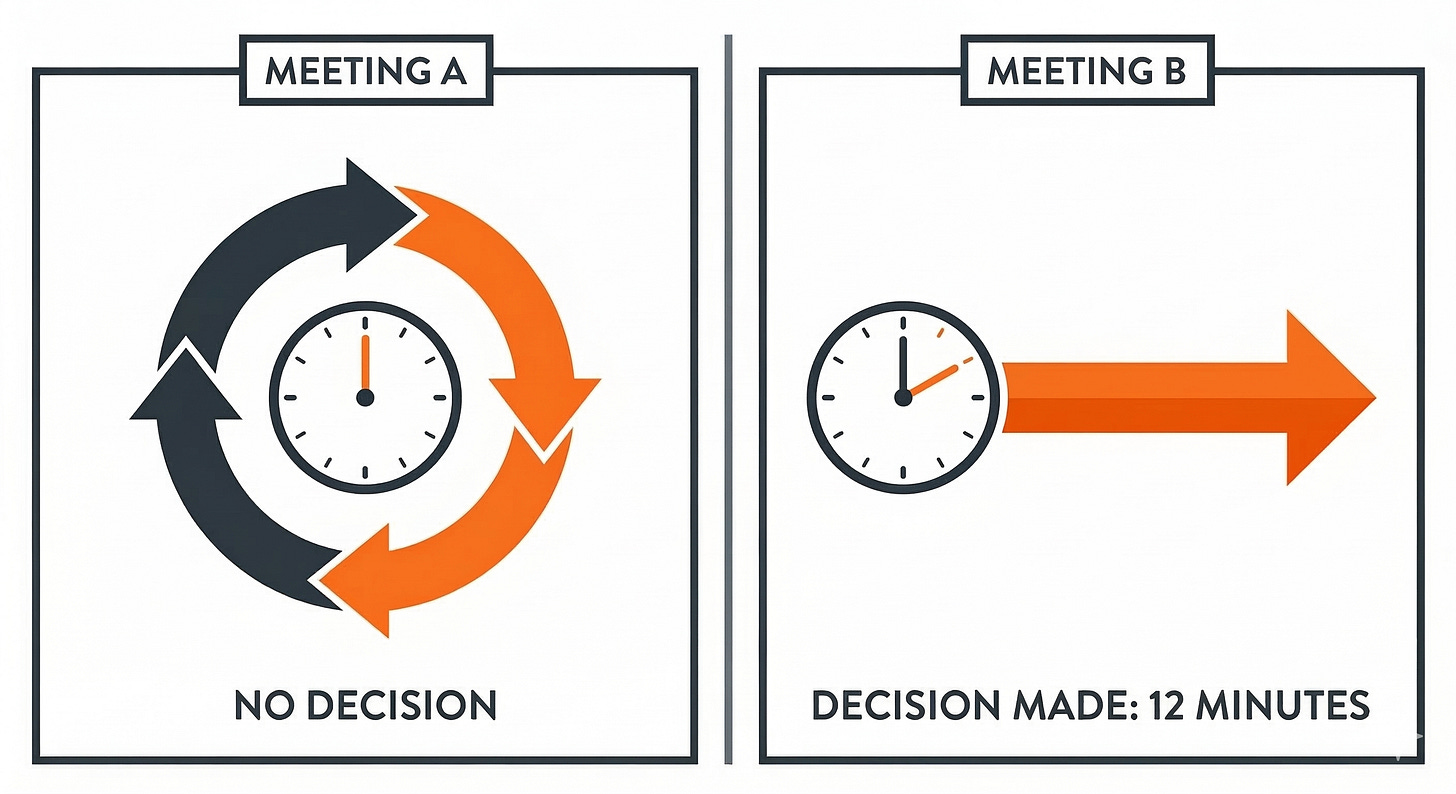

Meeting A.

Sixty minutes. The deck runs forty. Three slides on market size, two on competitive landscape, one on projected revenue. The team debates timeline.

A resource conflict surfaces: engineering is committed through Q3. Someone proposes a task force. The meeting ends with a follow-up and an action item to “gather more data.”

The AI writes four bullet points. Accurate. A clean record of an inconclusive conversation.

Two weeks later, the follow-up runs fifty minutes. The same resource conflict. The data is gathered.

No decision.

Meeting B.

Same sixty minutes. But forty-eight hours before the meeting, three questions go out:

What do we know about this segment’s core problem?

What would we need to believe to expand now rather than wait?

What dependency has to move first?

People show up with opinions.

In the first fifteen minutes, the real constraint appears: not the engineering timeline, but an unresolved question about whether the segment’s buying motion fits the current sales model. Nobody had named it. It sat under the resource debate the whole time.

Once named, the decision takes twelve minutes.

The AI receives a decision, a rationale, and three context updates to encode for the agents running the expansion work. The execution cycle starts that day.

The difference isn’t discipline or facilitation. It’s context design.

Before a meeting fires, ask:

What does the decision-maker need to believe, and what do they need to know, for this conversation to produce calibration instead of recirculation?

If you can’t answer that before the invite goes out, the meeting isn’t ready.
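One way to make that check concrete is a small readiness gate. This is only an illustrative sketch: the `MeetingBrief` class and its field names are hypothetical, not a tool or format the manifesto prescribes. It assumes a meeting is ready once the decision is named and there is at least one stated belief and one stated fact the decision-maker needs.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MeetingBrief:
    """Hypothetical pre-invite brief; all names here are illustrative."""
    decision: str                                           # the call the meeting should produce
    must_believe: List[str] = field(default_factory=list)   # what the decision-maker needs to believe
    must_know: List[str] = field(default_factory=list)      # what the decision-maker needs to know

    def is_ready(self) -> bool:
        # Assumption: "ready" means the decision is named and both lists are non-empty.
        return bool(self.decision.strip()) and bool(self.must_believe) and bool(self.must_know)


# A draft with only the question filled in is not ready to send.
draft = MeetingBrief(decision="Expand into the new segment now, or wait?")
print(draft.is_ready())
```

The gate is deliberately blunt. It cannot judge whether the answers are good, only whether someone wrote them down before the invite went out.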

Every Meeting Is Either Building or Burning

Meetings have two legitimate outputs:

Build the context that makes a decision possible.

Produce the decision.

If a meeting does neither, it’s a pure loss—because the machine still runs, just on old assumptions.

So be honest about the product.

A coordination meeting produces assignments: who’s doing what, by when, with which resources. If agents can generate that automatically, human time spent producing it is pure waste.

A decision meeting produces a commitment: what we’re doing, why, what we’re not doing, and what changes downstream.

A context-building meeting produces the inputs that make that commitment possible: what’s true, what changed, what we believe, what we need to learn, and what must be settled to decide.

If the meeting builds context or produces a decision, the time was well spent. If it does neither, the machine just helps you execute faster on yesterday’s context.
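The build-or-burn test above can be written down as a simple classification. The sketch below is a hypothetical illustration, with invented names (`MeetingOutput`, `is_building`), of the rule that only context and decisions count as legitimate outputs of human meeting time.

```python
from enum import Enum
from typing import Set


class MeetingOutput(Enum):
    ASSIGNMENTS = "assignments"  # coordination: who does what, by when
    COMMITMENT = "commitment"    # decision: what we do, why, what we drop
    CONTEXT = "context"          # inputs: what's true, what changed, what must be settled


# Per the text, only these two outputs justify human time in the room.
LEGITIMATE = frozenset({MeetingOutput.COMMITMENT, MeetingOutput.CONTEXT})


def is_building(outputs: Set[MeetingOutput]) -> bool:
    """Return True if the meeting produced context or a decision."""
    return bool(outputs & LEGITIMATE)


# A status sync that only hands out assignments does not count as building.
print(is_building({MeetingOutput.ASSIGNMENTS}))
```

Note that assignments alone never make a meeting count here. That mirrors the claim that agents can generate them automatically, which is itself an assumption about your tooling.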

There is no neutral meeting.

Every hour spent in a room where the real question stays buried is an hour where the system gets no update. The execution layer keeps running on yesterday’s picture of the world.

Compounding works both ways.

Meetings that build context leave behind a sharper map: what’s true about the customer, the market, and the constraints. The next decision starts half-made.

Meetings that avoid both context and decision leave behind a log. And logs don’t move the business.

Most organizations have spent years optimizing how they meet. Almost none have designed how they decide.

Decision Architecture—The Design of Organizational Judgment

The companies that keep gaining from AI over the next three years won’t be separated by model choice or agent stack complexity.

They’ll be separated by judgment.

Decision architecture is the design of organizational judgment: how a company decides what’s true, what matters, what to do next, and what to ignore.

This work has always existed. It just didn’t have enough space. When execution was slow and expensive, judgment got squeezed between coordination, approvals, and the drag of getting humans to move together.

Agents change the economics. Execution gets cheap. The constraint moves upstream.

When a machine can carry work forward nonstop, the quality of the business depends on the quality of the calls that aim it. Agents don’t fix judgment. They amplify it. Clean judgment turns into compounding. Sloppy judgment turns into fast, consistent mistakes.

Decision architecture is the system that keeps judgment usable at scale.

It determines who decides what, what good context looks like before a call gets made, and how a decision changes what the execution layer does tomorrow.

Strategy still lives upstream. But this is the daily work of keeping a powerful execution layer pointed at the right problems, with current inputs, under clear ownership.

Returns are capped by what your organization can decide, and how quickly it can update what it believes.

P.S. Fast decisions aren't fast. They're the last five minutes of slow work most leaders never see. The Context Pyramid maps the levels behind every decision that actually moves.

Read it here: The 5 Levels of Slow Work Behind Fast Decisions